Hi there,

I recently ran a metatranscriptomic analysis with the SAMSA2 pipeline, which uses DIAMOND blastx. Using MEGAN UE, I then ran daa-meganizer (all default settings) on about 40 .daa files with the megan-map-Feb2022-ue.db mapping file (10.1 GB).
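For reference, here is a minimal Python sketch of how a batch run over the ~40 files could be scripted. It assumes that daa-meganizer is on the PATH and takes the input file via `-i` and the mapping database via `-mdb`; check `daa-meganizer -h` for your MEGAN version before relying on it. `build_meganizer_commands` and `run_all` are hypothetical helper names. Apart from the input and mapping file, no options are set, so the run uses the defaults described above.

```python
import shlex
import subprocess
from pathlib import Path

# ASSUMPTION: daa-meganizer is on PATH and uses -i (input DAA) and -mdb (mapping DB).
# Verify with `daa-meganizer -h` for your MEGAN version before running.
MEGANIZER = "daa-meganizer"


def build_meganizer_commands(daa_dir, map_db):
    """Hypothetical helper: one daa-meganizer argument list per .daa file, sorted by name."""
    return [
        [MEGANIZER, "-i", str(daa), "-mdb", str(map_db)]
        for daa in sorted(Path(daa_dir).glob("*.daa"))
    ]


def run_all(commands, dry_run=True):
    """Hypothetical helper: print every command, and execute it only if dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            # check=True raises CalledProcessError on the first file that fails
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Directory and DB file name taken from the post; adjust to your own paths.
    cmds = build_meganizer_commands(
        "/projects/soil_ecology/dr359/UM/samsa2/fire_interleaved/step_4_output/daa_binary_files",
        "megan-map-Feb2022-ue.db",
    )
    run_all(cmds, dry_run=True)
```

With `dry_run=True` the script only prints the commands, so you can inspect them before committing to a run. Each invocation reloads the mapping files, as the log shows.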


Meganizer output for one (relatively small) metatranscriptome file:

```
Functional classifications to use: EC, EGGNOG, GTDB, INTERPRO2GO, KEGG, SEED
Loading ncbi.map: 2,396,736
Loading ncbi.tre: 2,396,740
Loading ec.map: 8,200
Loading ec.tre: 8,204
Loading eggnog.map: 30,875
Loading eggnog.tre: 30,986
Loading gtdb.map: 240,103
Loading gtdb.tre: 240,107
Loading interpro2go.map: 14,242
Loading interpro2go.tre: 28,907
Loading kegg.map: 25,480
Loading kegg.tre: 68,226
Loading seed.map: 961
Loading seed.tre: 962
Meganizing: /projects/soil_ecology/dr359/UM/samsa2/fire_interleaved/step_4_output/daa_binary_files/fire_9.merged.ribodepleted.fastq.nr.daa
Meganizing init
Annotating DAA file using FAST mode (accession database and first accession per line)
Annotating references
10% 100% (3.7s)
Writing
10% 20% 30% 40% 50% 60% 70% 80% 90% 100% (0.2s)
Binning reads Initializing…
Initializing binning…
Using ‘Naive LCA’ algorithm for binning: Taxonomy
Using Best-Hit algorithm for binning: SEED
Using Best-Hit algorithm for binning: EGGNOG
Using Best-Hit algorithm for binning: KEGG
Using ‘Naive LCA’ algorithm for binning: GTDB
Using Best-Hit algorithm for binning: EC
Using Best-Hit algorithm for binning: INTERPRO2GO
Binning reads…
Binning reads Analyzing alignments
10% 20% 30% 40% 50% 60% 70% 80% 90% 100% (15.6s)
Total reads: 2,481,396
With hits: 2,481,396
Alignments: 2,481,396
Assig. Taxonomy: 2,315,108
Assig. SEED: 1,002
Assig. EGGNOG: 769
Assig. KEGG: 742
Assig. GTDB: 155,886
Assig. EC: 272
Assig. INTERPRO2GO: 274,910
MinSupport set to: 248
Binning reads Applying min-support & disabled filter to Taxonomy…
10% 20% 30% 40% 50% 60% 70% 80% 90% 100% (0.2s)
Min-supp. changes: 2,500
Binning reads Applying min-support & disabled filter to GTDB…
10% 20% 30% 40% 50% 60% 70% 80% 90% 100% (0.8s)
Min-supp. changes: 671
Binning reads Writing classification tables
10% 20% 30% 40% 50% 60% 70% 80% 90% 100% (2.0s)
Binning reads Syncing
100% (0.0s)
Class. Taxonomy: 1,238
Class. SEED: 225
Class. EGGNOG: 54
Class. KEGG: 376
Class. GTDB: 147
Class. EC: 187
Class. INTERPRO2GO: 410
Total time: 30s
Peak memory: 5.4 of 32G
```


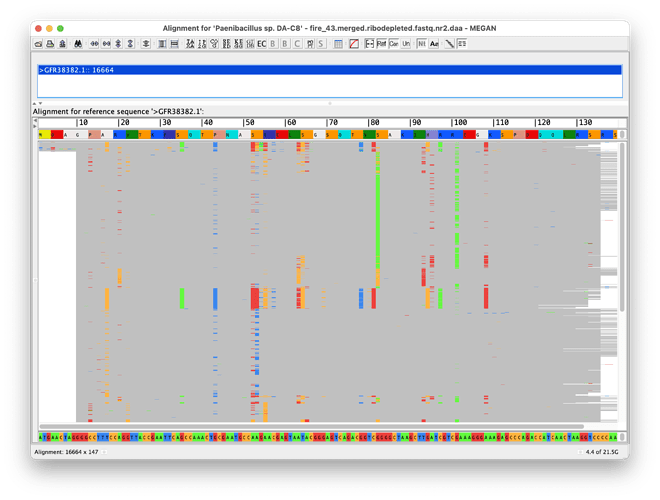

To me, this looks like really poor read assignment for all of the functional databases. Taxonomic assignment for this sample was clearly fine (2.32 million of 2.48 million reads), so what have I done wrong on the functional side? The comparison file of the 36 meganized DAA files contains 154 million reads but only 8,013 KEGG assignments. The data are Illumina HiSeq metatranscriptomes.
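To put numbers on this, here is a small Python sketch that reads the summary lines from the meganizer log and turns them into per-classification percentages. `parse_summary` and `assignment_rates` are hypothetical helpers written against the log format pasted above; they are not part of MEGAN. On that sample, taxonomy comes out at about 93% of reads, InterPro2GO at about 11%, and KEGG at about 0.03%. The comparison-file figure (8,013 of ~154 million) works out to about 0.005%. The sketch also prints alignments per read, which is exactly 1.0 here because the log reports as many alignments as reads.

```python
import re

# Matches the per-run summary lines printed by daa-meganizer (format as in the log above).
# "Class." and "Min-supp." lines are deliberately not matched.
COUNT_LINE = re.compile(
    r"^(Total reads|With hits|Alignments|Assig\. [A-Za-z0-9]+):\s*([\d,]+)\s*$"
)
PREFIX = "Assig. "


def parse_summary(log_text):
    """Hypothetical helper: map each summary label to its integer count."""
    counts = {}
    for line in log_text.splitlines():
        m = COUNT_LINE.match(line.strip())
        if m:
            counts[m.group(1)] = int(m.group(2).replace(",", ""))
    return counts


def assignment_rates(counts):
    """Percentage of total reads assigned in each classification."""
    total = counts["Total reads"]
    return {
        key[len(PREFIX):]: 100.0 * value / total
        for key, value in counts.items()
        if key.startswith(PREFIX)
    }


# Summary lines copied from the meganizer output above.
SAMPLE = """\
Total reads: 2,481,396
With hits: 2,481,396
Alignments: 2,481,396
Assig. Taxonomy: 2,315,108
Assig. SEED: 1,002
Assig. EGGNOG: 769
Assig. KEGG: 742
Assig. GTDB: 155,886
Assig. EC: 272
Assig. INTERPRO2GO: 274,910
"""

if __name__ == "__main__":
    counts = parse_summary(SAMPLE)
    for name, pct in assignment_rates(counts).items():
        print(f"{name:12s} {pct:8.4f}% of reads")
    print("alignments per read:", counts["Alignments"] / counts["Total reads"])
    # Comparison-file figures from the post: 8,013 KEGG assignments in ~154 million reads.
    print(f"KEGG (comparison file): {100 * 8013 / 154_000_000:.4f}% of reads")
```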

Please let me know whether the problem lies with my DAA files, with the meganizing step, or somewhere else. If daa-meganizer is the issue, a reply with an example daa-meganizer command would be ideal.

Thanks in advance!